Reading time: 12 min

The beginnings of circular intelligence

AI is entering a feedback loop: the more professionals use it, the smarter it gets - raising big questions about expertise, data, and control.

Article written by

Aaron Kirk

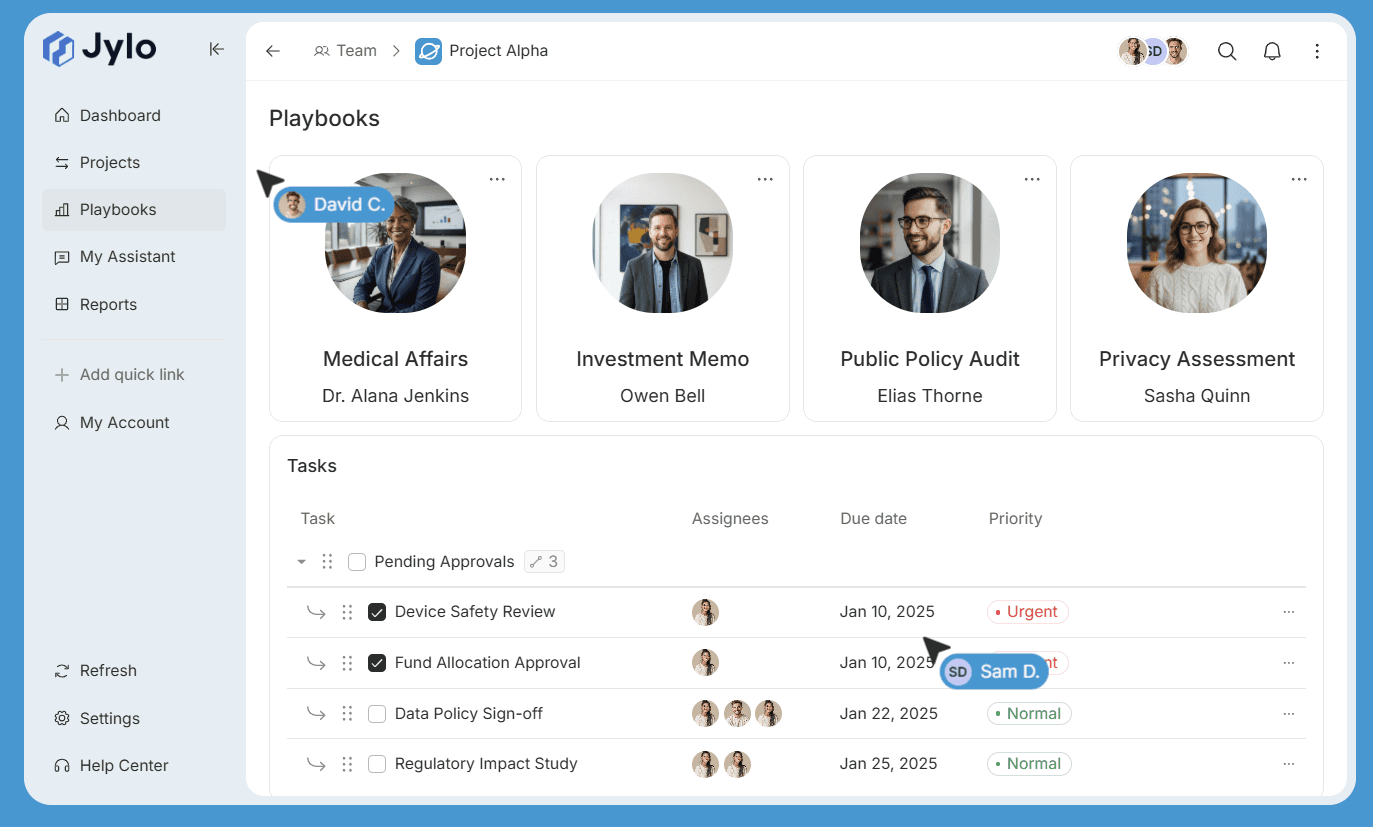

At Jylo, we’ve spent a lot of time thinking about how AI actually improves. Early on, we experimented with using models like OpenAI’s GPT-4o to automatically build legal playbooks in our platform. The idea sounded great in theory: let the model generate prompts and workflows on its own, then produce high-quality legal outputs. In practice, it didn’t work. The prompts the model wrote for itself, especially around complex legal concepts such as locked-box mechanisms in Share Purchase Agreements (SPAs), simply weren’t good enough. We ultimately pulled the feature and instead focused on helping customers craft their own prompts, where the results were consistently stronger and more reliable.

Recently, though, something interesting started happening. We released Anthropic’s Claude into Jylo and began testing its ability to generate prompts autonomously. Compared with GPT-4o and several other models, the difference was noticeable. Claude’s understanding of legal concepts and its ability to structure prompts appropriately seemed significantly improved. It looked less like a model guessing at legal workflows and more like one that had developed a deeper intuition about them.

We asked several models to "Write a prompt that reviews a Share Purchase Agreement purchase price mechanism." and then had a lawyer assess the resulting prompts.

| Model | Assessment | Score (out of 10) |
| --- | --- | --- |
| GPT-4o | This prompt is detailed and provides a comprehensive framework for reviewing the purchase price mechanism of a SPA, covering introduction, current mechanism, market analysis, financial performance, customer feedback, internal input, competitive advantage, recommendations, conclusion, action items, and appendices. However, it seems more geared towards a business's pricing strategy rather than a Share Purchase Agreement. | 5 |
| GPT-4.1 | Offers a concise and straightforward approach to reviewing the purchase price mechanism, focusing on structure, key terms, adjustments, risks, and best practices. It's useful but lacks the depth and specificity needed for a thorough analysis of an SPA. | 7 |
| GPT-5 | Provides an extremely detailed and comprehensive prompt for reviewing the purchase price mechanism in an SPA, covering all critical aspects from structure and adjustments to protections and covenants, with a focus on clarity, fairness, and commercial reasonableness. It includes specific questions to answer and requires a detailed output. | 9 |
| Claude Sonnet 4.5 | Presents a structured approach to reviewing the purchase price mechanism, focusing on key areas such as base purchase price, adjustments, earn-out provisions, escrow arrangements, payment terms, risk assessment, and tax considerations. It requires a summary assessment, red flags, recommendations, and comparison to market standards. | 8 |
| Claude Opus 4.6 | Offers a thorough and detailed prompt for reviewing the purchase price mechanism in an SPA, covering purchase price structure, locked box mechanism, completion accounts, earn-out provisions, price adjustments, definitions, risk allocation, and practical considerations. It requires a structured output with key findings, risk levels, and recommended actions. | 9 |

As the scores show, it's clear the models are improving at prompt writing.

That raised an obvious question: where is this improvement coming from? It’s unlikely that the leap is purely the result of more research papers or publicly available contracts on the internet. Instead, our working theory is that the improvement reflects how people are using these systems. The thousands of lawyers, analysts, and professionals interacting with models - especially in consumer and enterprise settings - may be indirectly shaping how the models behave and improve over time.

We were reminded of this during a recent due diligence exercise on Anthropic through Microsoft. In the Enterprise terms, we noticed language suggesting that feedback from the user (e.g. "great job" or "thanks, but you missed something") could be used to train the model.

Data Handling: If you explicitly report feedback or bugs to us or otherwise choose to allow us to use your data, then we may use your chats and coding sessions to train our models.

That stood out because enterprise agreements are generally expected to guarantee strict isolation of customer data. At Jylo, we addressed this by hosting Anthropic models through Amazon Web Services, where customer inputs are not proxied back to the model provider. However, many organisations still operate on consumer-style or lightly governed enterprise terms without fully interrogating how feedback data is handled.

Anthropic loosely defines feedback elsewhere as interactions such as thumbs up or thumbs down signals, but we would strongly prefer to see “Feedback” explicitly defined in the contract itself as a clear, deterministic user action. Without that clarity, there is room for ambiguity around whether natural conversational responses such as “great job” or “you missed something” could also be interpreted as training signals - effectively creating RLHF-style feedback loops from professionals operating at enterprise scale. Given the intensity of competition between model providers, the strategic value of high-quality positive and negative signals, and the fact these clauses appear anywhere near enterprise agreements - where customers should never become the product - we believe this deserves much closer scrutiny.
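To make the distinction concrete, here is a minimal sketch of what a deterministic definition of "Feedback" could look like if it were expressed as a filter over user events. It is purely illustrative: the event types, field names, and the `is_training_feedback` function are hypothetical, and nothing here describes how Anthropic, Microsoft, or Jylo actually process data.

```python
from dataclasses import dataclass
from enum import Enum


class EventType(Enum):
    CHAT_MESSAGE = "chat_message"  # free-text conversational turn
    THUMBS_UP = "thumbs_up"        # explicit rating click
    THUMBS_DOWN = "thumbs_down"    # explicit rating click
    BUG_REPORT = "bug_report"      # explicit report submitted by the user


# Hypothetical contract definition: only these explicit actions count as "Feedback".
EXPLICIT_FEEDBACK = frozenset(
    {EventType.THUMBS_UP, EventType.THUMBS_DOWN, EventType.BUG_REPORT}
)


@dataclass(frozen=True)
class Event:
    event_type: EventType
    text: str = ""


def is_training_feedback(event: Event) -> bool:
    """Return True only for explicit, deterministic feedback actions.

    The message text is deliberately never inspected, so a conversational
    "great job" cannot be reinterpreted as a training signal.
    """
    return event.event_type in EXPLICIT_FEEDBACK


events = [
    Event(EventType.CHAT_MESSAGE, "great job"),
    Event(EventType.CHAT_MESSAGE, "thanks, but you missed something"),
    Event(EventType.THUMBS_DOWN),
]
feedback_flags = [is_training_feedback(e) for e in events]
print(feedback_flags)  # [False, False, True]
```

Under a definition like this, the classification depends only on the type of action the user took, never on an interpretation of what they typed, which is the kind of clarity we would want the contract to provide.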

If those feedback loops are indeed active, then it is entirely possible that the day-to-day work of professionals is quietly contributing to the training of the next generation of frontier models.

This is what we call circular intelligence. The traditional narrative has been that AI vendors train models using curated datasets, and customers simply consume the results. But what we may be seeing now is a loop: professionals use AI to solve complex problems, their interactions become signals, and those signals feed back into improving the model itself. Over time, the expertise of entire industries could gradually be absorbed into the systems they rely on.

There’s also an interesting competitive dynamic forming. Frontier model providers positioning themselves as enterprise-focused are seeing strong performance in enterprise tasks, which raises questions about how that knowledge enters the models in the first place. Meanwhile, models from OpenAI, connected to enterprise environments through Microsoft, may face limitations because strict enterprise data boundaries with Microsoft prevent that same feedback from flowing back to OpenAI. If true, it highlights a tension between customer trust, data governance, and the race to build the most capable models.

For white-collar professionals, this trend poses a deeper question. The more people rely on these systems, the better they become - and the more that shared intelligence becomes accessible to everyone else using them. Expertise that once provided a competitive advantage can gradually diffuse into the model layer itself. That’s the core tension of circular intelligence: the very act of using the system helps flatten the knowledge edge that made the user valuable in the first place.

Unlike manufacturing, where processes have been standardized for decades - producing the same goods over and over - knowledge work is fluid and constantly evolving. Right now, models have absorbed much of the world’s publicly available information, and professionals who haven't made their firm's data sovereignty a priority are in the honeymoon phase of layering new insights onto frontier models through their interactions. But this phase is unlikely to last forever. As more knowledge moves behind paywalls on the internet or into private AI environments like Jylo, the flow of new expertise into frontier models will likely slow, raising important questions about how these systems will continue improving.

Our view at Jylo is that foundation models are increasingly becoming reasoning engines, not repositories of specialized expertise. The real value will sit in the context layer: the proprietary workflows, knowledge, and governance that organizations keep sovereign. In other words, the competitive advantage shouldn’t be poured into the model itself - it should live in the systems and intelligence built on top.

Circular intelligence has never been more visible than it is right now. But it’s worth approaching it with clear eyes. Models get better partly because we use them, which makes them more compelling, which drives even more usage. That loop can be either a benefit or a burden, depending on which side of the economic fence you're on. Organizations need to think carefully about where their knowledge flows.

Whilst we're clearly talking our book here, we believe it has never been more important to ensure your AI supply chain protects your expertise - rather than unintentionally feeding it into something that might one day compete with you.
